2024-04-22 - Back to (movement) basics

After last week's failure in "blindly" modifying the character rig to play back saved animation clips, let's approach the problem differently, ready to accept that it might be time to dig into the SDK code and understand it deeply.

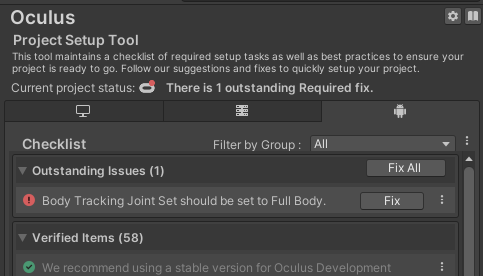

I quickly checked week #14 to see which sample was my starting point, and noticed that, on that occasion, I refused to let the Project Setup Tool fix the outstanding issue "Body Tracking Joint Set should be set to Full Body".

And in those same days, I was wondering why the generative legs in the samples didn't work.

Silly me.

I let the Project Setup Tool apply the fix, and guess what? The legs started working.

Not that I need them, but it's good to know that the sample is running properly. Almost properly: the cup material is broken.

The scene I selected as a starting point was `MovementISDKIntegration`, because it has the interesting feature of altering the input data so that the character bones follow some rules and constraints. While capturing this short clip, for example, in reality I'm completely closing my right hand into a fist, but the character hand adapts to a pose suitable for wrapping around the cup.

Not sure I'm going to use this, but it's interesting.

Now, let's see what the documentation says about this sample scene and the character rig.

While checking the documentation, I went back to the Unity-Movement repository and noticed that, just a few days ago, a new major release of the package (v5.0.0) was published (my project currently includes v4.2.1).

This is a bit annoying: I was ready to start analysing the package code, but it doesn't make sense to analyse an outdated version. On the other hand, if I update the package, I will almost surely need to repeat the steps I did last week to "clone" the needed elements from the Samples.

And to make things worse, to even try, I must also update the other Meta SDK packages, because this release depends on v63, and I have v62 in the project.

Boring! But it must be done. I really don't feel like doing it today, so I'm calling it a day a bit early.

2024-04-23 - Yet another SDK update

Surprise, surprise, I was on `v62` and `v64` is already available. I totally overlooked v63.

So, I'm going to switch the core SDK packages from `v62` to `v64`, and then I'm going to upgrade `com.meta.movement` from `v4.2.1` to `v5.0.1` - which was released... today! Glad I didn't install `v5.0.0` yesterday.

Let's upgrade everything and see if the project explodes.

So, my scene is broken as expected, and I assume it's because of the prefabs I duplicated last week, which should be re-duplicated or updated from outside the Unity editor.

There's something else which bothers me, though: opening the sample scene I'm using as reference, `MovementISDKIntegration`, it looks like they introduced a problem with the hands of the robot character, which don't perfectly match the tracking.

Or is it because of something I did? It shouldn't be, but to be sure... let's test in an empty project.

I created an empty project, imported the same SDK packages I'm using in the Particular Reality project, and tested the same scene.

The problem is still there - on the upside, the cup has the correct material.

This is quite annoying, because one would like to at least start from something working well before doing changes.

I verified that the problem only affects the robot character. If I open the `MovementHighFidelity` scene, the hands are correct.

I rolled back the `com.meta.movement` package to `v4.2.1`, while keeping the `v64` upgrade.

Everything works again, which is nice: the `v64` upgrade doesn't break anything.

It's worth doing a commit just with the `v64` update, and then think about what to do with `com.meta.movement`.

As a side note, when I need to try out different versions of packages, my favourite way of doing it is editing the project's package manifest (`Packages/manifest.json`) directly. It's much faster than using the package manager, and with the file under version control I can quickly discard changes to roll back packages. Highly recommended.

So, the fact that a major release breaks things is pretty normal (that's usually the important difference between a major version upgrade and a minor one).

What options do I have?

A) upgrade to `v5.0.1`, update my scene by re-cloning the sample prefabs, and ignore the hand tracking imperfection on the robot character I'm using for my tests

B) stay on `v4.2.1`, analyse that and keep developing my things as if the new version doesn't exist

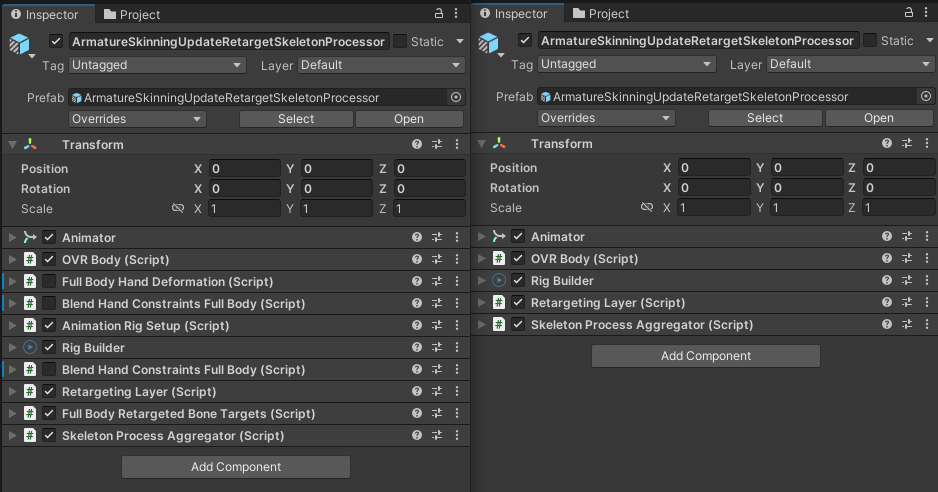

I was seriously considering option B, but then I started comparing the character rigs of `v4.2.1` and of `v5.0.1`, opening them side by side in two projects (the Particular Reality project rolled back to `v4.2.1`, the empty project from an hour ago on `v5.0.1`).

The setup in the new version is significantly simpler: it looks like the folks at Meta did some serious clean-up. This is very valuable in my eyes, so I definitely prefer upgrading.

I can live with the imperfect hands matching for a while, and with some luck, they're going to publish an update which fixes the issue before I even get to the point where I feel like I need to fix it myself.

So, let's update "my" prefabs and see if I manage to have my scene working on `v5.0.1`.

To only update what's needed, I compared (using WinMerge) the source files I duplicated from the samples last week with the same files in the new version of the package.

I found out that basically the only two files I need to update are

- `ArmatureSkinningUpdateRetargetSkeletonProcessor.prefab`
- `ArmatureSkinningUpdateRetarget.prefab`

So I'm copying the new versions of these files over their "counterparts":

- `PRAvatar_SkinningUpdateRetargetSkeletonProcessor.prefab`
- `PRAvatar_SkinningUpdateRetarget.prefab`

...and I'm fixing their references to other cloned elements, using the `guid` replacement technique I discussed last week.
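As a quick reminder of what that technique boils down to, here's a minimal sketch. It assumes the prefab is saved with Unity's text serialization, where references to other assets appear as `guid: ` followed by 32 hex digits. `GuidRemapper` and `Remap` are hypothetical names made up for this example, not the actual tool I used.

```csharp
using System;
using System.Collections.Generic;
using System.Text.RegularExpressions;

// Hypothetical helper: rewrites asset references inside the text of a prefab file.
public static class GuidRemapper
{
    // Matches "guid: " followed by 32 lowercase hex digits.
    private static readonly Regex s_rGuidRegex =
        new Regex(@"guid: ([0-9a-f]{32})", RegexOptions.Compiled);

    // Replaces every guid found in rOldToNew; other guids are left untouched.
    public static string Remap(
        string sPrefabText, IReadOnlyDictionary<string, string> rOldToNew)
    {
        return s_rGuidRegex.Replace(sPrefabText, m => {
            string sOld = m.Groups[1].Value;
            return rOldToNew.TryGetValue(sOld, out string sNew)
                ? "guid: " + sNew
                : m.Value;
        });
    }
}
```

The map would go from the guid of each sample asset to the guid of my clone of it, so that the overwritten prefabs point at "my" copies instead of the originals in the package.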

Ok, everything works again, and I'm now running `v64` and `v5.0.1` in my project.

I also introduced the hands displacement problem inherited from the samples, but that was expected.

Maybe tomorrow I'll be able to make some actual progress.

2024-04-24 - More visual debugging

Last week's testing, when I used the "runtime gizmos", showed that the bones of the skinned mesh weren't properly rotated.

I wrote:

> Everything looks working fine with the "skeleton" visualization because, I'm assuming, it doesn't really use the bones rotations, but just the joints positions, drawing lines between them.

After thinking some more, I believe that assumption was incorrect, because I remembered that I can show the joint axes by setting on `PlayerAvatarDebugGizmos` an option inherited from `SkeletonDebugGizmos`.

And they are fine:

Good.

Now, let's give another look at everything and see if I can figure out why the skinned mesh animation is so broken.

While re-examining the scene, I noticed that the skinned character fetches data from an `OVRBody`, while the clip recorder saves data fetched from a `Body`. I forgot about this difference in the data flow.

That script was attached to a node named `OVRBody`, which made things more confusing. Have I ever discussed my awful memory?

Anyway, I might have found the root of the problem. Fingers crossed. Let's recap and think about the body tracking data flow in the scene.

I discussed `Body` and `OVRBody` in my "deep dive" article.

Let's take the data flow diagram at the end of that article:

So, using a flowchart-like notation I'm making up on the spot, this is the current state of things:

Now, when I implemented `UpperBodyCharacterDataProviderBhv` last week, while fiddling with the data, I did actually somewhat revert the processing done in `FromOVRBodyDataSource`, but maybe I did something wrong there?

Last week I wrote:

> I might hack around this, saving the data from the skinned mesh animation after the processing, but I don't really like that idea.

but in fact I'm already saving data that is "more processed" than it should be.

I'm saving the `Body DATA` into the clip, and not the "lower level" `OVRBody DATA`.

Basically, I could simplify the flow and avoid the "inversion" of the processing done by `FromOVRBodyDataSource` (that I'm currently doing in `UpperBodyCharacterDataProviderBhv`).

This is the plan:

I modified the data flow (partially: I didn't complete the "skeleton" path, which now is basically mirrored because I'm skipping the `FromOVRBodyDataSource` processing).

But... the `SkinnedMesh` behaves exactly as it did before. I guess my "inversion" of the `FromOVRBodyDataSource` processing was correct, after all.

So where's the problem?

Time for more visually aided debugging.

I'm going to do a test visualization based on the "runtime gizmos" we already used, but that uses the interfaces exposed by `OVRBody`, exactly like the skinned mesh rendering should be doing.

Here's my new debugging script:

```csharp
using System;
using System.Collections.Generic;
using UnityEngine;
using Oculus.Interaction.Body.Input;
using BinaryCharm.ADT;

namespace BinaryCharm.ParticularReality.DebugManagement
{
    public class DebugAnimPlayerBhv : MonoBehaviour {

        // Source of the pose data, expected on the same node as this script
        private OVRSkeleton.IOVRSkeletonDataProvider m_rSkeletonDataProvider;

        // One runtime gizmo for each "key joint" being visualized
        private Dictionary<BodyJointId, GameObject> m_rJointToGizmoMap =
            new Dictionary<BodyJointId, GameObject>();

        private void Start() {
            initRuntimeGizmos();
        }

        private void Update() {
            updateRuntimeGizmos();
        }

        private void initRuntimeGizmos() {
            Action<BodyJointId> addRuntimeGizmo = (BodyJointId id) => {
                m_rJointToGizmoMap.Add(
                    id,
                    DebugManagerBhv.i().InstantiateGizmo(
                        null, PosRot.DEFAULT, 0.05f, "RG_" + id.ToString()
                    )
                );
            };

            addRuntimeGizmo(BodyJointId.Body_Root);
            addRuntimeGizmo(BodyJointId.Body_Hips);
            addRuntimeGizmo(BodyJointId.Body_Head);
            addRuntimeGizmo(BodyJointId.Body_LeftHandPalm);
            addRuntimeGizmo(BodyJointId.Body_RightHandPalm);
            addRuntimeGizmo(BodyJointId.Body_LeftArmUpper);
            addRuntimeGizmo(BodyJointId.Body_RightArmUpper);

            m_rSkeletonDataProvider =
                GetComponent<OVRSkeleton.IOVRSkeletonDataProvider>();
        }

        private void updateRuntimeGizmos() {
            OVRSkeleton.SkeletonPoseData skeletonPoseData =
                m_rSkeletonDataProvider.GetSkeletonPoseData();
            if (!skeletonPoseData.IsDataValid) return;

            // Move each gizmo to the pose of its joint, converting the
            // Oculus types to the Unity ones
            foreach (var kv in m_rJointToGizmoMap) {
                int iJointId = (int) kv.Key;
                Vector3 vPos = skeletonPoseData.BoneTranslations[iJointId]
                    .FromFlippedZVector3f();
                Quaternion qRot = skeletonPoseData.BoneRotations[iJointId]
                    .FromFlippedZQuatf();
                kv.Value.transform.SetPositionAndRotation(vPos, qRot);
            }
        }
    }
}
```

It's pretty simple. I spawn a bunch of runtime gizmos in the scene thanks to a utility method in `DebugManagerBhv`, one for each "key joint" I want to see. Then, I update their transforms on the basis of the `SkeletonPoseData` fetched from a script implementing the `OVRSkeleton.IOVRSkeletonDataProvider` interface, which is expected to be on the same node.

In terms of our data flow diagram, this new visualization is based on `OVRBody DATA` like the `SkinnedMesh` character, instead of using `Body DATA` like the `SkeletonDebugGizmos` visualization does.
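A note on the two `FromFlippedZ...` calls in the script: they convert the Oculus vector and quaternion types into the Unity ones, mirroring the Z axis on the way. As far as I understand it (this is my reading, not a copy of the SDK code), that means negating the Z component of a position, and the X and Y components of a rotation quaternion. Here's a sketch of that idea, using the `System.Numerics` types as stand-ins and a hypothetical `FlippedZSketch` class:

```csharp
using System;
using System.Numerics;

// Hypothetical stand-in for the conversion helpers; not the SDK implementation.
public static class FlippedZSketch
{
    // Mirrors a position across the XY plane.
    public static Vector3 FlipZ(Vector3 v)
    {
        return new Vector3(v.X, v.Y, -v.Z);
    }

    // Mirrors a rotation consistently with the position mirroring above.
    public static Quaternion FlipZ(Quaternion q)
    {
        return new Quaternion(-q.X, -q.Y, q.Z, q.W);
    }
}
```

The two conversions go together: rotating a point and then mirroring the result gives the same position as mirroring both the point and the rotation first.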

I decided to only show a few "key" joints, but with bigger gizmos. Less clutter, and easier to focus on the critical joints (root, hips, shoulders, hands).

Let's see what comes up!

Well, this new debug visualization tells me two important things.

First, I can now be 100% sure that the joints data provided by `UpperBodyCharacterDataProviderBhv` is correct.

Applying `DebugAnimPlayerBhv` on both character rigs, the one using the live data from the headset and the one using data from the recorded clip, gives consistent results, properly positioning the runtime gizmos on both.

Notice the runtime gizmos on my hands, at the beginning of the video, and how the gizmos on the character on the right move consistently with the (mirrored) skeleton on the left.

Second, I can see the difference in the pose of a runtime gizmo and of the actual bone of the `SkinnedMeshRenderer`.

I compared, using the Scene View of the Unity editor, the hips bone pose (using the editor gizmo) and the pose as calculated by the clip player (shown using the "runtime gizmo"):

Look at the two gizmos: not only are the orientations completely different, but the `SkinnedMeshRenderer` bone doesn't rotate at all.

Actually, not a single bone rotates, which brings me back to my intuition of last week: I thought that the skeleton visualization worked because it only needed positions while the rotations were wrong.

Then, I realized that it was using rotations after all. But I wasn't sure the data was correct, because I had two data paths with different processing. So, I changed that and became sure I had the correct data in place, with this new debug visualization.

At this point, I'm pretty confident that the issue is in the SDK code that takes the joints data and actually adjusts the bones.

Hopefully tomorrow I'll finally find the root cause.

2024-04-25 - Finding and fixing the bug

After the analysis conducted yesterday, I surrender to the idea of looking at the SDK code of the scripts involved in the character animation.

On the main node, there are these two scripts:

- `RetargetingLayer`
- `SkeletonProcessAggregator`

My idea since the beginning has been that these scripts access the skeleton data by calling the `GetSkeletonPoseData()` method of the `OVRSkeleton.IOVRSkeletonDataProvider` interface.

It's not obvious where this happens (inheritance, partial classes...), so I'm going to set a breakpoint in my implementation of that method, in `UpperBodyCharacterDataProviderBhv`, and step through the execution, hopefully understanding why the code sets the positions of the bones but not the rotations.

After stepping for a while without noticing anything that looked problematic, I saw something that triggered my internal alarms.

Let's see the `SkeletonPoseData` returned by `GetSkeletonPoseData()`:

```csharp
public struct SkeletonPoseData
{
    public OVRPlugin.Posef RootPose { get; set; }
    public float RootScale { get; set; }
    public OVRPlugin.Quatf[] BoneRotations { get; set; }
    public bool IsDataValid { get; set; }
    public bool IsDataHighConfidence { get; set; }
    public OVRPlugin.Vector3f[] BoneTranslations { get; set; }
    public int SkeletonChangedCount { get; set; }
}
```

To avoid some useless recomputations, at some points, the SDK code checks the `SkeletonChangedCount` field, and only acts if it changed.

But I don't remember setting this value in my implementation...

Ooops.

This was the code at the end of my `GetSkeletonPoseData()` method:

```csharp
return new OVRSkeleton.SkeletonPoseData {
    IsDataValid = true,
    IsDataHighConfidence = true,
    RootPose = rootPose,
    RootScale = 1.0f,
    BoneRotations = m_rBoneRotations,
    BoneTranslations = m_rBoneTranslations
};
```

Unfortunately, I had not set the `SkeletonChangedCount` value at all.
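To see why a forgotten counter can freeze things, here's a minimal sketch of the kind of check described above. It is not the SDK code: `PoseData` and `CachingConsumer` are hypothetical stand-ins, and I'm only assuming that some expensive step gets skipped whenever the counter is the same as in the previous call.

```csharp
using System;

// Hypothetical stand-in for the pose struct: only the field that matters here.
public struct PoseData
{
    public int SkeletonChangedCount { get; set; }
}

// Hypothetical consumer that redoes its work only when the counter changes.
public class CachingConsumer
{
    private int m_iLastChangedCount = -1;

    // How many times the "expensive" update actually ran.
    public int RecomputeCount { get; private set; }

    public void Consume(PoseData data)
    {
        // Same counter as last time: assume nothing changed and skip the work.
        if (data.SkeletonChangedCount == m_iLastChangedCount) return;

        m_iLastChangedCount = data.SkeletonChangedCount;
        ++RecomputeCount; // the expensive update would happen here
    }
}
```

A struct created without setting the counter always carries the default value `0`, so a consumer like this one would do its work on the first call and then never again, no matter how much the other fields change.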

Let's fix it by incrementing a counter at each call and returning that value in the `struct`.

```csharp
++m_iChangedCount;

return new OVRSkeleton.SkeletonPoseData {
    IsDataValid = true,
    IsDataHighConfidence = true,
    RootPose = rootPose,
    RootScale = 1.0f,
    BoneRotations = m_rBoneRotations,
    BoneTranslations = m_rBoneTranslations,
    SkeletonChangedCount = m_iChangedCount
};
```

The horror at the stupidity of the error, but also the joy of seeing everything finally working!

A constructor would have forced me to initialize all the needed fields, but in this case the language couldn't support me in "not forgetting" to initialize and use that field, and a small distraction ended up costing me quite a bit of time.
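One way to get that kind of safety back, as a sketch: create the struct through a helper whose parameters are all mandatory, so that forgetting one becomes a compile-time error. `Pose` is a hypothetical, simplified stand-in for `OVRSkeleton.SkeletonPoseData`, and `PoseFactory.Create` is a name I made up for the example.

```csharp
using System;

// Hypothetical, simplified stand-in for the SDK struct.
public struct Pose
{
    public bool IsDataValid { get; set; }
    public float RootScale { get; set; }
    public int SkeletonChangedCount { get; set; }
}

public static class PoseFactory
{
    // No optional parameters: leaving out any argument doesn't compile.
    public static Pose Create(
        bool bIsDataValid, float fRootScale, int iSkeletonChangedCount)
    {
        return new Pose {
            IsDataValid = bIsDataValid,
            RootScale = fRootScale,
            SkeletonChangedCount = iSkeletonChangedCount
        };
    }
}
```

With this in place, a call like `PoseFactory.Create(true, 1.0f)` is rejected by the compiler, while an object initializer happily leaves the missing property at its default value.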

Well, it's late, but this needs a celebration video where I record a clip and use it to high five myself.

I posted the last seconds on Twitter etc, but only you, dear readers, get the full story and the full clip.

2024-04-26 - Clean-up Friday

The last couple of days have been a bit "heavy" and the weekend is around the corner, so I'm going to take it easy and not start implementing new features.

I want to do a bit of cleanup, because my code from the last couple of days was mostly for testing and finding out what was wrong, and I took some shortcuts along the way.

Then, I want to capture a clip I can use for a cool "screenshot Saturday" update tomorrow (the last clip of that kind that I published is from more than two months ago, yikes!).

I removed the clutter and committed my changes to the repository.

I went back to the data path using `Body` data and not `OVRBody`, as it was innocent.

I'm doing a little more processing this way, but it feels cleaner, as I'm operating on higher level data that uses the Unity vector and quaternion types instead of the Oculus ones.

I left the other data path commented out in the code, in case I need to go back to it.

Then, I did a few minor changes to be able to capture a nice clip.

Namely, I:

- disabled the "skeleton" visualization unless debug mode is active
- added a gesture to show/hide the mocap UI
- made the "playback" character invisible if no clip is playing
- fixed a culling bug that made the body vfx disappear once in a while

Then, I pushed a build to the Quest and recorded a motion clip in which I played the part of an enemy attacking the player as soon as they get on the platform. Then, I enabled the Quest video recording and "acted" the other part of the interaction.

It's just a glimpse into a possible future, and not a real gameplay interaction, but it should be cool for a promotional clip. The whole "behind the scenes" truth is just for my elite audience of DevLog readers!

Another week is over. How's things? Not bad!

I finally have a decent set of features related to body tracking.

The process started with the introduction of the `SkinnedMesh` avatar for the player character in Week #14, and now I can use that kind of character to playback motion clips, too.

I can conveniently record and edit those motion clips directly from VR, which is great.

Even if I'm not 100% done, I don't see major roadblocks ahead. What's missing? A couple of minor things I already mentioned in the past:

- showing some "keyframe" references to be able to easily capture clips that can flow into each other
- being able to tune the clip frequency so that I can use clips with different "quality" as needed

I decided to postpone the implementation of these features to the moment when I'm going to actually start capturing and using clips.

I'm going to capture clips to act as "visual tutorials", showing the player the gestures they have to perform, and I'm also going to use them to prototype the enemies' animations (movement, attacks, defences).

So what am I going to do, if not this, next week?

That's going to be a surprise, and it might take a little longer than a week.

Two weeks, maybe three. See you then!