2024-09-16 - Avatar modes

In week 25, I did a lot of thinking and wrote down many ideas. Now it's time to work on making those ideas part of the game.

Before actually writing code, let's prepare a high-level list of the things I need to do.

So, I need to:

- significantly improve the player avatar management
- make the special "mirror portal" between the limbo and menu zones
- make the "white flash" transition between the limbo and menu zones
- implement the dynamic "hint system", with particles pushing the player towards a specific spot and showing what gestures to do
- implement the navigation between contexts and the application flow shown by the FSM diagram at the end of the article

That's a lot of work, but as long as I manage to split it into reasonable tasks that I can tackle one at a time and then put together at the end, it should be fine.

I'm going to start from the avatar.

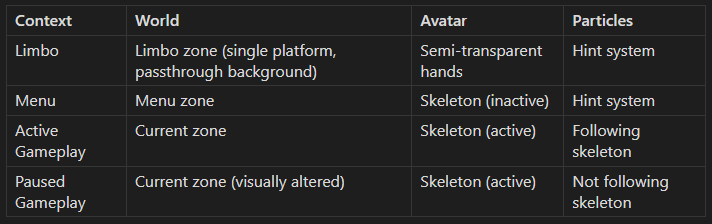

I think that I reached a good synthesis of what I need to do about the avatar in a table I showed last week:

Basically, `Context` and `World` tell when a certain avatar/particles setup is in effect, and that will come into play later.

Now, we have to work on the `Avatar` and `Particles` so that they can be in the different required configurations, each with its own behaviour.

Even if they will ultimately work together, we should be able to work on the two subsystems separately.

I feel like we need a name to distinguish "these" particles from the others. Let's go with "smart particles", as they will actively do things and not just be a cosmetic or passive element of the game.

So, two separate subsystems that will work together, because normally the smart particles will follow the avatar.

Let's start with the avatar management, which I have already implemented in a basic form and will now refactor. The table indicates three possible avatar modes, so let's define an identifier for each mode, to keep things short and sweet:

- Semi-transparent hands: `limboHands`
- Skeleton (inactive): `inactiveSkeleton`
- Skeleton (active): `activeSkeleton`

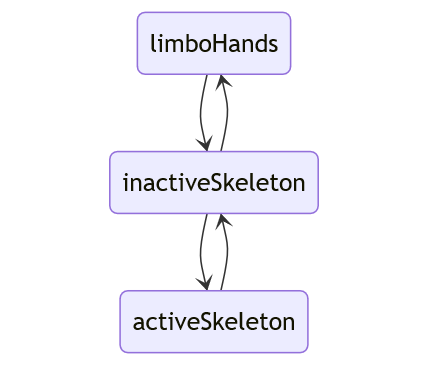

According to what I defined in week 25, the player can't go directly from gameplay to limbo, but must always go through the menu zone. This means that we don't need to define a direct transition between `limboHands` and `activeSkeleton`: the avatar must always go through `inactiveSkeleton`, like this:

The kind of handling I need to implement to take care of these state changes is easier than most things we've been dealing with in the past weeks.

Why? Because I don't need to think about state persistence, the possibility to rewind gameplay, etc.

I'm basically dealing with a state element which is not part of the gameplay state, but of the application state. Something which is intrinsically transient and won't be stored in a savegame.

This doesn't mean I'll be totally sloppy: I'm still going to define a simple FSM which supports non-instant transitions. That will come in handy when I need to orchestrate the state change of different elements while implementing the global application flow.
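The mode-level rule described above is that there is no direct switch between `limboHands` and `activeSkeleton`. That rule can be captured as a tiny adjacency table. Here's a minimal sketch in plain C#. `EAvatarMode`, `AvatarModeRules` and `canSwitch` are hypothetical names used only for illustration, not code from the project:

```csharp
using System;
using System.Collections.Generic;

// Hypothetical identifiers for the three avatar modes.
public enum EAvatarMode { limboHands, inactiveSkeleton, activeSkeleton }

public static class AvatarModeRules
{
    // Direct mode changes allowed by the design: limboHands and activeSkeleton
    // are never adjacent, so inactiveSkeleton always sits in between.
    private static readonly Dictionary<EAvatarMode, EAvatarMode[]> s_allowed =
        new Dictionary<EAvatarMode, EAvatarMode[]>() {
            { EAvatarMode.limboHands,       new[] { EAvatarMode.inactiveSkeleton } },
            { EAvatarMode.inactiveSkeleton, new[] { EAvatarMode.limboHands, EAvatarMode.activeSkeleton } },
            { EAvatarMode.activeSkeleton,   new[] { EAvatarMode.inactiveSkeleton } },
        };

    // True if the design allows switching directly from eFrom to eTo.
    public static bool canSwitch(EAvatarMode eFrom, EAvatarMode eTo) =>
        Array.IndexOf(s_allowed[eFrom], eTo) >= 0;
}
```

Keeping the allowed edges as data, rather than as scattered `if`s, makes it easy to validate a requested mode change before it ever reaches the FSM.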

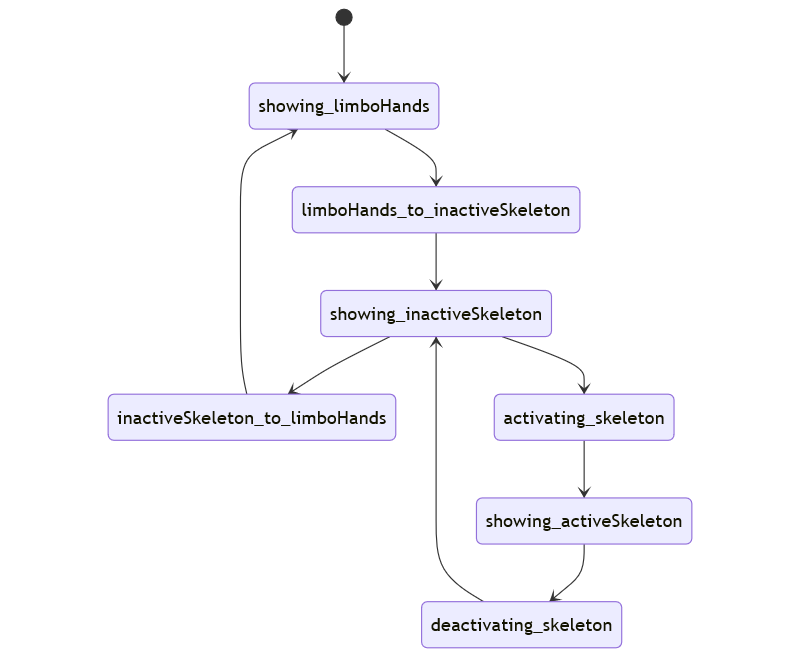

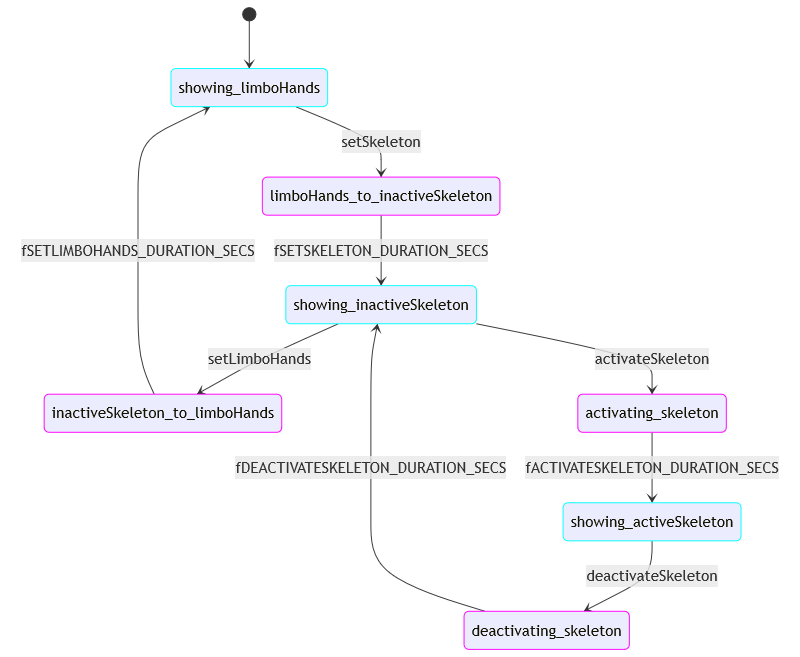

Let's complete the day with a really NOT interesting FSM diagram which makes the transition states explicit and asymmetric, so that, by reading the FSM state, it's immediately obvious not only whether a transition is happening, but also which way it's going.

This won't force me to be fancy in the presentation layer, but if I decide to be, the logic will be there already.
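To make the idea of explicit, asymmetric transition states concrete, here's a sketch that decodes each state into a (source mode, destination mode) pair. The state identifiers are the ones used in the FSM definition further down in this devlog. The `EMode` enum and the `AvatarStateInfo` helper are hypothetical:

```csharp
using System;

// State identifiers as used in the FSM definition.
public enum EState
{
    showing_limboHands,
    limboHands_to_inactiveSkeleton,
    showing_inactiveSkeleton,
    activating_skeleton,
    showing_activeSkeleton,
    deactivating_skeleton,
    inactiveSkeleton_to_limboHands,
}

// Hypothetical mode identifiers.
public enum EMode { limboHands, inactiveSkeleton, activeSkeleton }

public static class AvatarStateInfo
{
    // "Core" states have the same mode on both sides; transition states don't,
    // so the state alone tells both that a transition is happening and its direction.
    public static (EMode eFrom, EMode eTo) decode(EState eState)
    {
        switch (eState)
        {
            case EState.showing_limboHands:             return (EMode.limboHands, EMode.limboHands);
            case EState.limboHands_to_inactiveSkeleton: return (EMode.limboHands, EMode.inactiveSkeleton);
            case EState.showing_inactiveSkeleton:       return (EMode.inactiveSkeleton, EMode.inactiveSkeleton);
            case EState.activating_skeleton:            return (EMode.inactiveSkeleton, EMode.activeSkeleton);
            case EState.showing_activeSkeleton:         return (EMode.activeSkeleton, EMode.activeSkeleton);
            case EState.deactivating_skeleton:          return (EMode.activeSkeleton, EMode.inactiveSkeleton);
            case EState.inactiveSkeleton_to_limboHands: return (EMode.inactiveSkeleton, EMode.limboHands);
            default: throw new ArgumentOutOfRangeException(nameof(eState));
        }
    }

    public static bool isTransition(EState eState)
    {
        var (eFrom, eTo) = decode(eState);
        return eFrom != eTo;
    }
}
```

Because `limboHands_to_inactiveSkeleton` and `inactiveSkeleton_to_limboHands` are distinct states, a presenter can tell the direction of a transition without any extra bookkeeping.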

2024-09-17 - Avatar FSM implementation

Time to do some actual implementation work.

As a first step, I renamed what I've been calling `PlayerAvatar` until today to `PlayerBody`, as it was pretty much a description of the head/hands and bones of the player body, used not only to drive the avatar but also for motion clip capture and playback.

So the old name was not very appropriate anyway, and now I can use it in the right context: the avatar management.

I went and implemented the FSM I designed yesterday. As I've been doing recently, I also implemented a `TestPlayerAvatarBhv` to test the state changes via the inspector.

There's a bit of boilerplate in the code, which bothers me a little, but I feel like the system is becoming quite robust. Everything worked correctly on the first try.

While implementing the FSM, I noticed quite a bit of repetition. The states are of two kinds. The three "core" ones represent the normal visualization of a certain mode. The "transition" ones will be presented with some kind of animation between the modes.

The execution exits a "core" state when the right input signal comes, while a "transition" state automatically moves to another state after a specific delay.

We're talking about very quick animations, and considering that they should be activated when navigating through contexts as previously explained, they shouldn't need to be interruptible or reversible, which keeps things simple.

Let's revisit the diagram - I recently learned how to apply colors to states in Mermaid diagrams, and hey, I'm going to make use of that.

I labelled the transitions so that they show the input signal needed to change state (on the arrows coming out of the cyan states, the "core" ones), or the constant representing the duration of the transition (on the arrows coming out of the magenta states).

In a resource-constrained environment, I would implement this FSM logic differently, in a more compact way that just uses two variables, `currCoreState` and `nextCoreState`, plus a flag and a timestamp to handle the transition logic.
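For comparison, here's a minimal sketch of what that compact variant could look like: two mode variables, a flag and a timestamp. All names are hypothetical. A single transition duration is used for simplicity, whereas the real FSM has one duration per transition. Time is passed in explicitly to keep it testable:

```csharp
using System;

// Hypothetical modes, ordered along the chain limboHands - inactiveSkeleton - activeSkeleton.
public enum EMode { limboHands, inactiveSkeleton, activeSkeleton }

public sealed class CompactAvatarFsm
{
    private readonly float m_fDurationSecs;  // single transition duration (simplification)
    private bool m_bInTransition;            // the flag
    private float m_fTransitionStartSecs;    // the timestamp

    public EMode currCoreState { get; private set; } = EMode.limboHands;
    public EMode nextCoreState { get; private set; } = EMode.limboHands;
    public bool isInTransition => m_bInTransition;

    public CompactAvatarFsm(float fDurationSecs) { m_fDurationSecs = fDurationSecs; }

    // Starts a transition if idle and eTarget is adjacent to the current mode.
    public bool request(EMode eTarget, float fNowSecs)
    {
        if (m_bInTransition) return false;                                   // not interruptible
        if (Math.Abs((int)eTarget - (int)currCoreState) != 1) return false;  // relies on enum order
        nextCoreState = eTarget;
        m_bInTransition = true;
        m_fTransitionStartSecs = fNowSecs;
        return true;
    }

    // Advances the transition and returns its progress in [0, 1] (1 when idle).
    public float update(float fNowSecs)
    {
        if (!m_bInTransition) return 1f;
        float fProgress = (fNowSecs - m_fTransitionStartSecs) / m_fDurationSecs;
        fProgress = Math.Min(1f, Math.Max(0f, fProgress));
        if (fProgress >= 1f)
        {
            currCoreState = nextCoreState;
            m_bInTransition = false;
        }
        return fProgress;
    }
}
```

While in transition, `currCoreState` and `nextCoreState` together give the direction, so the asymmetry of the diagram is preserved without dedicated transition states.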

Instead, I'm sticking to the approach used in the other FSM implementation shown so far.

This doesn't mean that I can't avoid repetition in the code: I just need to define a couple of helper methods to generate the two kinds of state function I need, but with different parameters:

```csharp
// Builds the state function of a "core" state: if an input is available and
// mapped in rTransitionsMap, it returns the destination state.
private static Func<IPlayerAvatarFSMView, EState?> getBaseStateFunc(
    Dictionary<EInput, EState> rTransitionsMap
) {
    return (IPlayerAvatarFSMView rExec) => {
        var rData = rExec.getExecData().getData();
        if (rExec.isInputAvailable()) {
            FSMInput<EInput> input = rExec.fetchInput();
            EState eState;
            if (rTransitionsMap.TryGetValue(input.m_id, out eState)) {
                return eState;
            }
        }
        return null; // no transition: stay in the current state
    };
}

// Builds the state function of a "transition" state: it updates the 0-1
// animation progress and moves to eDstState once fDurationSecs have elapsed.
private static Func<IPlayerAvatarFSMView, EState?> getAnimStateFunc(
    float fDurationSecs,
    EState eDstState
) {
    return (IPlayerAvatarFSMView rExec) => {
        var rData = rExec.getExecData().getData();
        float fProgress =
            rExec.getExecData().getCurrStateElapsedTime() /
            fDurationSecs;
        rData.m_fAnimProgress01 = Mathf.Clamp01(fProgress);
        if (rData.m_fAnimProgress01 == 1f) {
            return eDstState;
        }
        return null;
    };
}
```

Then, I can simply define the state functions for the whole FSM in a very compact manner.

It would be cool (and not that hard) to automatically generate this definition from the Mermaid diagram text... but not this time.

```csharp
private static
Dictionary<EState, Func<IPlayerAvatarFSMView, EState?>> rStates =
    new Dictionary<EState, Func<IPlayerAvatarFSMView, EState?>>() {
    {
        EState.showing_limboHands,
        getBaseStateFunc(
            new Dictionary<EInput, EState>() {
                { EInput.setSkeleton, EState.limboHands_to_inactiveSkeleton }
            }
        )
    },
    {
        EState.limboHands_to_inactiveSkeleton,
        getAnimStateFunc(
            fSETSKELETON_DURATION_SECS,
            EState.showing_inactiveSkeleton
        )
    },
    {
        EState.showing_inactiveSkeleton,
        getBaseStateFunc(
            new Dictionary<EInput, EState>() {
                { EInput.activateSkeleton, EState.activating_skeleton },
                { EInput.setLimboHands, EState.inactiveSkeleton_to_limboHands }
            }
        )
    },
    {
        EState.activating_skeleton,
        getAnimStateFunc(
            fACTIVATESKELETON_DURATION_SECS,
            EState.showing_activeSkeleton
        )
    },
    {
        EState.showing_activeSkeleton,
        getBaseStateFunc(
            new Dictionary<EInput, EState>() {
                { EInput.deactivateSkeleton, EState.deactivating_skeleton }
            }
        )
    },
    {
        EState.deactivating_skeleton,
        getAnimStateFunc(
            fDEACTIVATESKELETON_DURATION_SECS,
            EState.showing_inactiveSkeleton
        )
    },
    {
        EState.inactiveSkeleton_to_limboHands,
        getAnimStateFunc(
            fSETLIMBOHANDS_DURATION_SECS,
            EState.showing_limboHands
        )
    }
};
```

2024-09-18 - Avatar presentation layer

Now that I have the avatar mode logic in place, and a way to activate it through the inspector, it's time to work on the presentation layer.

For the "limbo hands" I'm just going to use the default hands offered by the SDK.

For the "skeleton", I'm going to use the robot character from the Meta samples I started with, tweaking its material to show the activated/non-activated modes.

I made a few changes to the scene and added two simple scripts controlling the two elements which come into play, `LimboHandsBhv` and `SkeletonAvatarBhv`, each connected to the relevant nodes.

Then, I implemented the "state presenter" behaviour for the player avatar, which reads from its state manager and configures the nodes which take care of the presentation.

The visibility change of the hands and of the skeleton is instant for now, even though I pass in a 0-1 value to indicate the progress when in a transition state. For the skeleton activation/deactivation, I use that value for a simple color interpolation.

At some point, once I have the actual, custom 3D model for the player avatar, I will do something fancier.
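Here's a minimal sketch of that kind of progress-driven color interpolation. It's written as plain C# so it runs outside Unity. `System.Numerics.Vector4` stands in for `Color`, `EState` is reduced to the skeleton-related states, and `SkeletonTint` is a hypothetical helper. The point it illustrates is that deactivation can reuse the same 0-1 progress, just reversed:

```csharp
using System;
using System.Numerics;

// Reduced state set: only the skeleton-related states matter for the tint.
public enum EState { showing_inactiveSkeleton, activating_skeleton, showing_activeSkeleton, deactivating_skeleton }

public static class SkeletonTint
{
    // How "active" the skeleton looks: 0 = inactive, 1 = active.
    public static float getActivation01(EState eState, float fAnimProgress01)
    {
        switch (eState)
        {
            case EState.activating_skeleton:    return fAnimProgress01;
            case EState.deactivating_skeleton:  return 1f - fAnimProgress01;
            case EState.showing_activeSkeleton: return 1f;
            default:                            return 0f;
        }
    }

    // Clamped RGBA interpolation between the two colors, in the spirit of Unity's Color.Lerp.
    public static Vector4 getTint(Vector4 cInactive, Vector4 cActive, EState eState, float fAnimProgress01)
    {
        float t = Math.Min(1f, Math.Max(0f, getActivation01(eState, fAnimProgress01)));
        return Vector4.Lerp(cInactive, cActive, t);
    }
}
```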

Everything is quite simple and tidy:

- `LimboHandsBhv` controls the visibility of the semi-transparent hands
- `SkeletonAvatarBhv` controls the visibility and "activation" of the skeleton body
- `PlayerAvatarBhv` reads the avatar state data and uses it to properly configure `LimboHandsBhv` and `SkeletonAvatarBhv`

Here's a short clip of me checking that everything works properly using the `TestPlayerAvatarBhv`.

Notice the small jump in the hand position when switching between hands and skeleton. You might remember the mismatch that got introduced when updating the `com.meta.movement` package to version `5.0.1`. I checked on GitHub and the current release is `6.0.0`. I wonder if they fixed it. It might be worth checking, hoping there are no big breaking changes (it's a major version bump!).

Anyway, it's going to take a while before avatar mode switching works in-game as specified by the design: we need to build some other pieces of the puzzle first.

2024-09-19 - Mirrored body

Let's focus on one small part of the design work done in week 25:

> With pretty much nothing else to do, the player will have to walk into the portal, or at least reach into it with their hands. When he "touches" the reflected avatar, in a white flash, I will teleport the player to the "menu" zone, and they will find themselves embodying the avatar which was "on the other side" one moment before that.

So, how do I implement this? Let's see what I have, and what's missing.

I have:

- a working portal system which allows me to "see" into the destination zone
- a way to drive characters from body tracking data (live, or saved)

I don't have:

- logic to detect the conditions to activate this special teleport (the player is not walking into a portal but is just "touching" it)
- the "white flash"
- a way to "mirror" the body tracking data

I can place a copy of the skeleton avatar on the destination platform (which gets shown in the portal on the limbo platform), but if I don't flip the avatar properly, the illusion won't work.

Luckily, I remember the mirroring being part of one of the samples. Time to revisit them and see if they can save me some work.

I checked the sample I remembered, which is in the `MovementRetargeting` scene of the `com.meta.movement` package.

The implementation involves:

- a `MirrorTransformation` script, which lets you define the mirror normal and plane offset, and links to a `_transformToMirror` which acts as the parent transform of the "mirrored" objects
- a `LateMirroredObject` script, to be attached to a "mirrored" object and linked to the "original" one, which lets you define a list of `MirroredTransformPair`, where each pair links an "original transform" (of the object to mirror) to a "mirrored transform" (of the mirrored object), and copies the original transform to the mirrored transform
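How the sample computes the mirrored transforms internally isn't covered here. What follows is only a sketch of the geometry such a setup involves, using `System.Numerics` types and hypothetical names. A plane is given by a unit normal `n` and an offset `d`: points `p` with `n·p = d` lie on it. Positions are reflected across the plane, and rotations are conjugated by the reflection so they remain proper rotations. The `planeOffset` helper shows how the plane could be anchored to a point on the limbo platform instead of the world origin. This is only the geometric core: a full avatar mirror may also need to map left bones to right ones (or use a mirrored mesh), which the sketch ignores.

```csharp
using System;
using System.Numerics;

// Hypothetical helper: reflections across the plane { p : Dot(n, p) == d }, n unit length.
public static class MirrorMath
{
    // Offset of the plane with normal n passing through pointOnPlane.
    public static float planeOffset(Vector3 n, Vector3 pointOnPlane) => Vector3.Dot(n, pointOnPlane);

    // p' = p - 2 (n·p - d) n
    public static Vector3 mirrorPoint(Vector3 p, Vector3 n, float d) =>
        p - 2f * (Vector3.Dot(n, p) - d) * n;

    // Directions ignore the offset: v' = v - 2 (n·v) n
    public static Vector3 mirrorDirection(Vector3 v, Vector3 n) =>
        v - 2f * Vector3.Dot(n, v) * n;

    // For q = (u, w), the rotation R*M*R (R = reflection, M = rotation of q)
    // is q' = (-u + 2 (n·u) n, w): the vector part is reflected and negated.
    public static Quaternion mirrorRotation(Quaternion q, Vector3 n)
    {
        var u = new Vector3(q.X, q.Y, q.Z);
        Vector3 m = -mirrorDirection(u, n);
        return new Quaternion(m.X, m.Y, m.Z, q.W);
    }
}
```

For a mirror facing along the X axis, `mirrorRotation` reduces to the familiar `(x, -y, -z, w)` component flip.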

In the case of the player character, in the scene there's another instance of the character model used for the player, under the node set as `_transformToMirror`, and on its `Skeleton` node there's the `LateMirroredObject` which links it to the player character model.

I should be able to replicate this setup pretty easily. The only problem is that in the `limboHands` state I wouldn't have an "original" body to feed to `LateMirroredObject`, because the skeleton avatar is not actively tracking in that state. Maybe I can change it so that it is active but not rendered? Let's try.

Ok, I got it working!

I did what I anticipated, with one catch: "active but not rendered" couldn't mean turning off the `SkinnedMeshRenderer`, which needs to be active to properly update the bones. What I had to do instead was keep it active but draw it with the "null shader" shown on 2024-02-14.

I didn't have to touch anything except the implementation of a `setShown()` method I had defined in `SkeletonAvatarBhv`.

There are still a few things to get done before considering this "mirroring" feature done, like placing the "mirror" transform relative to the limbo platform (for now I just placed it at the origin, which only works if there's no manually set play area offset).

I'll get back to it soon.

2024-09-20 - White flash transition

Let's keep building the elements we need. Today I'm going to tackle the effect to be used when transitioning from the limbo hands (in the limbo zone) to the skeleton avatar (in the menu zone).

I'll follow my usual implementation principle, which is doing things in a basic way but with a sensible interface, and maybe replace them in the future with something better.

I want to be able to tune the transition timing and have a "safe" interval where I know that the player can't see anything, allowing me to change things around them without breaking the immersion.

The most basic thing that comes to mind is a full-screen white flash whose transparency level I can control via code.

So, in the end, I'm going to do something like:

- when ready, fade to fully opaque white in 0.2 sec
- when fully opaque, set the avatar mode / teleport to the menu zone etc.
- finally, fade to fully transparent in 0.1 sec
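The three steps above can be sketched as a small, engine-agnostic helper. `WhiteFlash` is a hypothetical class, and the 0.2 s / 0.1 s values would be passed in from the plan. It guarantees at least one update where the screen is fully white, and that's where the switch callback runs exactly once:

```csharp
using System;

// Hypothetical helper: fade to white, run a callback while fully opaque, fade back.
// Durations are assumed to be > 0.
public sealed class WhiteFlash
{
    private readonly float m_fFadeInSecs;
    private readonly float m_fFadeOutSecs;
    private readonly Action m_onFullyOpaque;
    private float m_fElapsedSecs;
    private bool m_bSwitchDone;

    public float opacity01 { get; private set; }
    public bool isDone => m_bSwitchDone && opacity01 <= 0f;

    public WhiteFlash(float fFadeInSecs, float fFadeOutSecs, Action onFullyOpaque)
    {
        m_fFadeInSecs = fFadeInSecs;
        m_fFadeOutSecs = fFadeOutSecs;
        m_onFullyOpaque = onFullyOpaque;
    }

    public void update(float fDeltaSecs)
    {
        m_fElapsedSecs += fDeltaSecs;
        if (m_fElapsedSecs < m_fFadeInSecs)
        {
            opacity01 = m_fElapsedSecs / m_fFadeInSecs;       // fading to white
        }
        else if (!m_bSwitchDone)
        {
            opacity01 = 1f;                                   // fully white: the "safe" moment
            m_bSwitchDone = true;
            m_onFullyOpaque();                                // e.g. set avatar mode, teleport
        }
        else
        {
            float fOutSecs = m_fElapsedSecs - m_fFadeInSecs;  // fading back to transparent
            opacity01 = Math.Max(0f, 1f - fOutSecs / m_fFadeOutSecs);
        }
    }
}
```

Usage would be `new WhiteFlash(0.2f, 0.1f, () => { /* switch mode, teleport */ })`, calling `update` every frame and feeding `opacity01` to whatever draws the overlay.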

So, how to implement the white flash?

The crudest way, which I've sometimes used in the past by adapting a classic non-VR approach, is to stick a quad drawn with a transparency-capable shader in front of the camera, and set its color.

After a brief search, I noticed that there's an `OVRScreenFade` script offered by the SDK that basically does that, creating the geometry via code, which is nice.

Let's try to use it...

I want control over the transparency level and timing of the fade, so I didn't use the `FadeIn` / `FadeOut` methods offered by `OVRScreenFade.cs`.

Actually, I made a super-thin wrapper over it and I'm going to use that whenever I need the functionality.

```csharp
using UnityEngine;
using BinaryCharm.Behaviours;

namespace BinaryCharm.ParticularReality.Presentation {

    public class ScreenFadeManagerBhv : ManagerBhv<ScreenFadeManagerBhv> {

        private void Awake() {
            registerInstance(this);
        }

        public void setOpacityLevel(float f01) {
            OVRScreenFade.instance.SetExplicitFade(f01);
        }

        public void setFadeColor(Color c) {
            OVRScreenFade.instance.fadeColor = c;
        }

    }

}
```

In the `PlayerAvatarBhv` script, which "orchestrates" the other components related to the avatar presentation, I called `setOpacityLevel` remapping the progress level of the transition states `limboHands_to_inactiveSkeleton` and `inactiveSkeleton_to_limboHands`.

This seems like a good moment to throw in a cool little trick. I wanted to remap a 0-1 value to one that goes up and then down, so that we get maximum opacity in the middle with a fade out/fade in. To do that, I did:

```csharp
// fAnim01 is the progress level of the transition
fFadeOpacity = 1f - Mathf.Abs(2f * fAnim01 - 1f);
ScreenFadeManagerBhv.i().setOpacityLevel(fFadeOpacity);
```

An equivalent formulation, using a conditional, would have been:

```csharp
if (fAnim01 < 0.5f) {
    fFadeOpacity = fAnim01 * 2f;
} else {
    fFadeOpacity = (1f - fAnim01) * 2f;
}
```

...that could be written in a more compact way using the ternary operator:

```csharp
fFadeOpacity = (fAnim01 < 0.5f ? fAnim01 : 1f - fAnim01) * 2f;
```

Using the absolute value is a bit more opaque, but feels cleaner and avoids branching.

Not that it would matter here, but it's the kind of thing that's nice to know and can be much more important in shader code. Still, when I do this kind of thing, I try to leave the equivalent, explicit formulation as a comment.

Another week of (part-time) work on Particular Reality is done. Let's complete this DevLog entry with a short clip:

At some point I will probably add an easing function to the value, but even in the current state, I feel it's adequate.
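If I do add easing, one simple option is to pass the triangle value through a smoothstep cubic, t²(3 − 2t). That's just an assumption, nothing decided. The cubic has zero slope at 0 and 1, so the flash would start, peak and end softly instead of with sharp corners. A plain C# sketch with hypothetical names, keeping the explicit formulation as a comment:

```csharp
using System;

// Hypothetical helper: the triangle remap from above, optionally eased with smoothstep.
public static class FlashCurve
{
    // 0 -> 1 -> 0 as fAnim01 goes 0 -> 0.5 -> 1.
    // Explicit equivalent: (fAnim01 < 0.5f ? fAnim01 : 1f - fAnim01) * 2f
    public static float triangle01(float fAnim01) => 1f - Math.Abs(2f * fAnim01 - 1f);

    // Smoothstep cubic: maps [0, 1] to [0, 1] with zero slope at both ends.
    public static float smoothStep01(float t) => t * t * (3f - 2f * t);

    // Eased flash opacity: soft start, soft peak, soft end.
    public static float easedFlash01(float fAnim01) => smoothStep01(triangle01(fAnim01));
}
```

In Unity, `Mathf.SmoothStep(0f, 1f, t)` should compute the same cubic (with `t` clamped), so the helper wouldn't even be needed there.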

Next week I'll continue the work, taking care of the "touching mirror detection" and, hopefully, of the "mirror portal" teleportation, so that we can see it all come together.